Semantic & Vector Search

Keyword search breaks down the moment users describe intent rather than exact terms. Semantic and vector search bridges that gap by understanding meaning, not just matching tokens. We design and implement dense vector retrieval pipelines on top of Elasticsearch's native kNN capabilities — from embedding model selection and ingest pipeline instrumentation through to production-grade hybrid search with measurable relevance uplift.

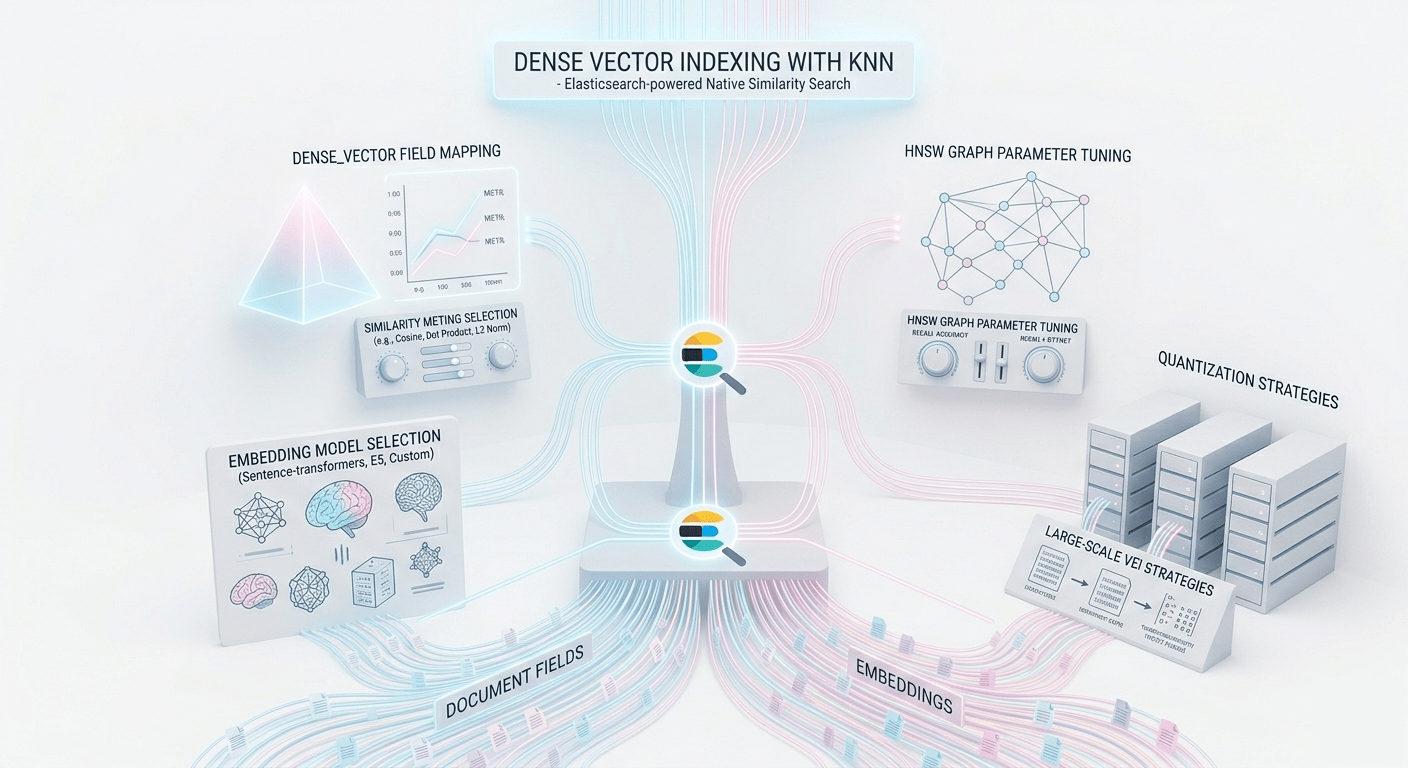

Dense Vector Indexing with kNN

Elasticsearch's native approximate kNN search stores dense vector embeddings directly alongside your document fields, enabling similarity queries without an external vector database. We configure your dense_vector mappings, select an appropriate similarity metric (cosine, dot product, or L2 norm), and tune the HNSW index parameters (m and ef_construction) together with the query-time num_candidates setting to balance recall against query latency for your specific data distribution.

- dense_vector field mapping and similarity metric selection
- HNSW graph parameter tuning for recall/latency trade-off
- Embedding model selection (sentence-transformers, E5, custom)
- Quantization strategies for large-scale vector indices
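
As a rough illustration of the mapping and query settings described above, here is a minimal sketch that builds the request bodies as plain Python dictionaries. It assumes an Elasticsearch 8.x cluster; the field names (`title`, `embedding`), the 384-dimension size, and the helper `build_knn_query` are hypothetical choices for this example, and the parameter values are starting points rather than recommendations.

```python
# Hypothetical index layout: field names and the 384-dim size are
# illustrative assumptions, not values taken from any real deployment.
EMBEDDING_DIMS = 384

index_body = {
    "mappings": {
        "properties": {
            "title": {"type": "text"},
            "embedding": {
                "type": "dense_vector",
                "dims": EMBEDDING_DIMS,
                "index": True,
                # Alternatives include "dot_product" and "l2_norm".
                "similarity": "cosine",
                "index_options": {
                    # Recent 8.x releases also offer quantized variants
                    # such as "int8_hnsw"; check your version's docs.
                    "type": "hnsw",
                    "m": 16,                 # neighbours kept per graph node
                    "ef_construction": 100,  # candidates tracked at build time
                },
            },
        }
    }
}


def build_knn_query(query_vector, k=10, num_candidates=100):
    """Build a kNN search body.

    num_candidates is the per-shard candidate pool: raising it tends to
    improve recall at the cost of query latency.
    """
    if len(query_vector) != EMBEDDING_DIMS:
        raise ValueError(f"expected {EMBEDDING_DIMS} dims, got {len(query_vector)}")
    if num_candidates < k:
        raise ValueError("num_candidates must be at least k")
    return {
        "knn": {
            "field": "embedding",
            "query_vector": list(query_vector),
            "k": k,
            "num_candidates": num_candidates,
        },
        "_source": ["title"],
    }
```

These dictionaries could be passed to whichever Elasticsearch client you use when creating the index and issuing the search. The client calls are omitted here so that the sketch stays runnable without a cluster.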

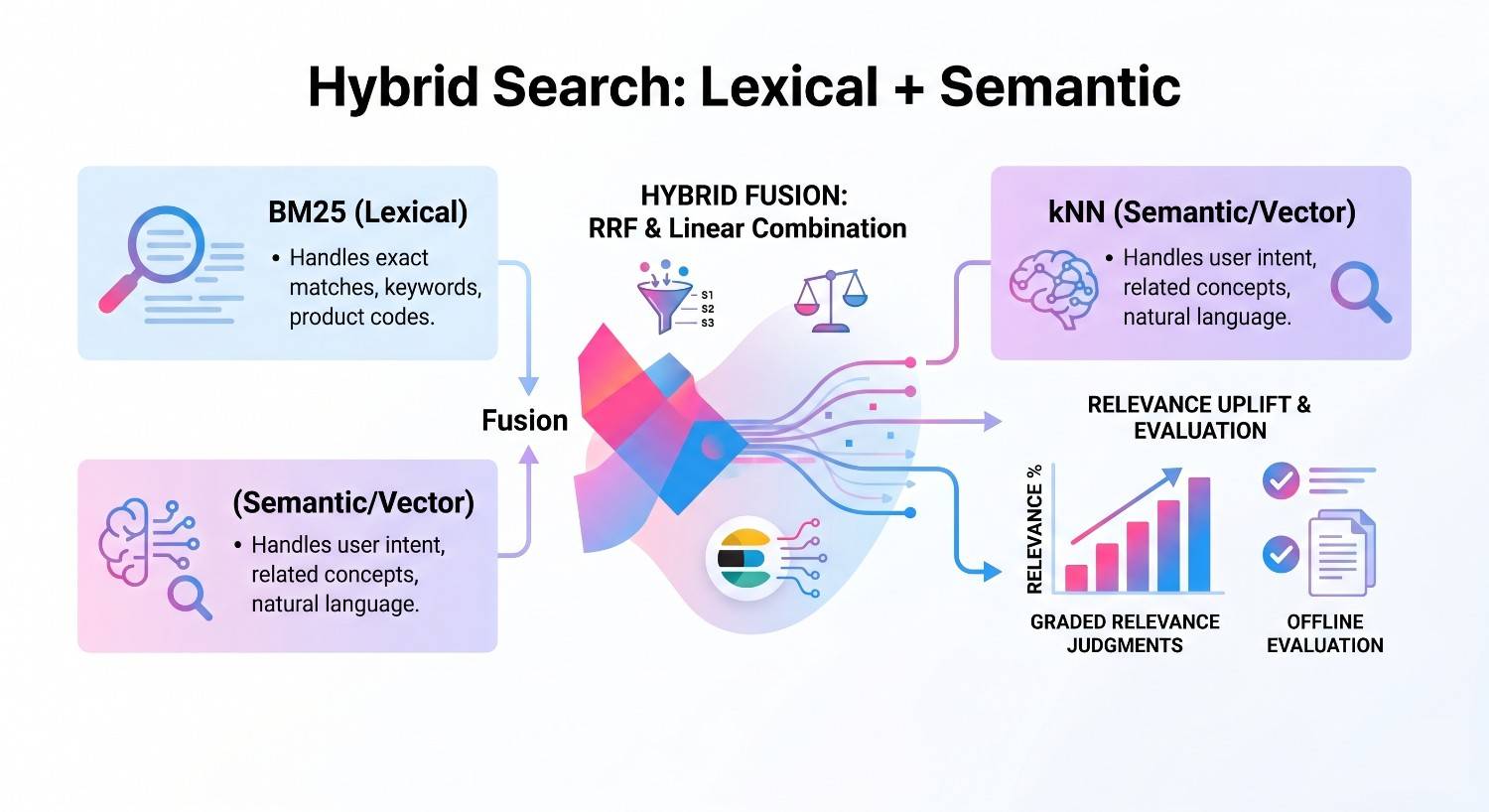

Hybrid Search: Lexical + Semantic

Pure vector search can miss the exact matches users expect, while pure keyword search misses semantic intent. A hybrid approach addresses both by blending BM25 and kNN signals at query time. We implement Reciprocal Rank Fusion (RRF), which merges result lists by rank position, and weighted linear combination of normalized scores, then measure the relevance uplift against your judgment lists. The result is a retrieval system that handles both "find exactly this" and "find something like this" queries with equal confidence.
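
To make the fusion step concrete, here is a minimal sketch of Reciprocal Rank Fusion in plain Python. The function name `rrf_fuse`, the document IDs, and the rank constant of 60 (a commonly used default) are assumptions for illustration. Elasticsearch can also perform RRF server-side, which this sketch does not cover.

```python
from collections import defaultdict


def rrf_fuse(rankings, rank_constant=60):
    """Fuse ranked lists of doc IDs with Reciprocal Rank Fusion.

    Each document scores sum(1 / (rank_constant + rank)) over every
    list it appears in, with ranks starting at 1.
    """
    scores = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (rank_constant + rank)
    # Highest fused score first; ties broken by doc ID for determinism.
    return sorted(scores.items(), key=lambda item: (-item[1], item[0]))


# Hypothetical result lists from a BM25 query and a kNN query.
bm25_hits = ["doc_a", "doc_b", "doc_c"]
knn_hits = ["doc_c", "doc_a", "doc_d"]

fused = rrf_fuse([bm25_hits, knn_hits])
for doc_id, score in fused:
    print(f"{doc_id}: {score:.5f}")
```

Because RRF uses only rank positions, it needs no score normalization between BM25 and vector similarity, which is one reason it is a convenient first baseline before trying weighted score combinations.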

- Reciprocal rank fusion (RRF) implementation and tuning
- Score normalization and weighted linear combination
- Query-time retrieval strategy selection by query type
- Offline evaluation against graded relevance judgments
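
Offline evaluation against graded judgments can be sketched with a metric such as nDCG@k. The example below is one possible formulation, assuming linear gain (the raw grade) and a log2 rank discount; the judgment grades, document IDs, and function names are hypothetical, and other gain functions or metrics may suit your data better.

```python
import math


def dcg_at_k(gains, k):
    """Discounted cumulative gain over the first k grades (linear gain)."""
    return sum(g / math.log2(i + 2) for i, g in enumerate(gains[:k]))


def ndcg_at_k(ranked_doc_ids, judgments, k=10):
    """nDCG@k for one query; unjudged documents count as grade 0."""
    gains = [judgments.get(doc_id, 0) for doc_id in ranked_doc_ids]
    ideal = sorted(judgments.values(), reverse=True)
    ideal_dcg = dcg_at_k(ideal, k)
    return dcg_at_k(gains, k) / ideal_dcg if ideal_dcg > 0 else 0.0


# Hypothetical graded judgments for a single query (0 = irrelevant, 3 = ideal).
judgments = {"doc_a": 3, "doc_b": 0, "doc_c": 2, "doc_d": 1}

lexical_ranking = ["doc_b", "doc_a", "doc_c", "doc_d"]
hybrid_ranking = ["doc_a", "doc_c", "doc_d", "doc_b"]

lexical_score = ndcg_at_k(lexical_ranking, judgments, k=4)
hybrid_score = ndcg_at_k(hybrid_ranking, judgments, k=4)
print(f"lexical nDCG@4: {lexical_score:.3f}")
print(f"hybrid  nDCG@4: {hybrid_score:.3f}")
```

In practice the per-query scores would be averaged over the whole judgment list, and the difference between the baseline and the hybrid configuration gives the measured uplift.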
