Monitoring & Observability

Modern applications generate metrics, logs, and traces simultaneously — and correlating them across services and infrastructure is what separates fast incident resolution from hours of manual investigation. We build observability platforms on Elasticsearch that unify all three signals in a single searchable store, enabling your SRE and DevOps teams to move from alert to root cause in minutes rather than hours. Every implementation is designed for production data volumes from day one.

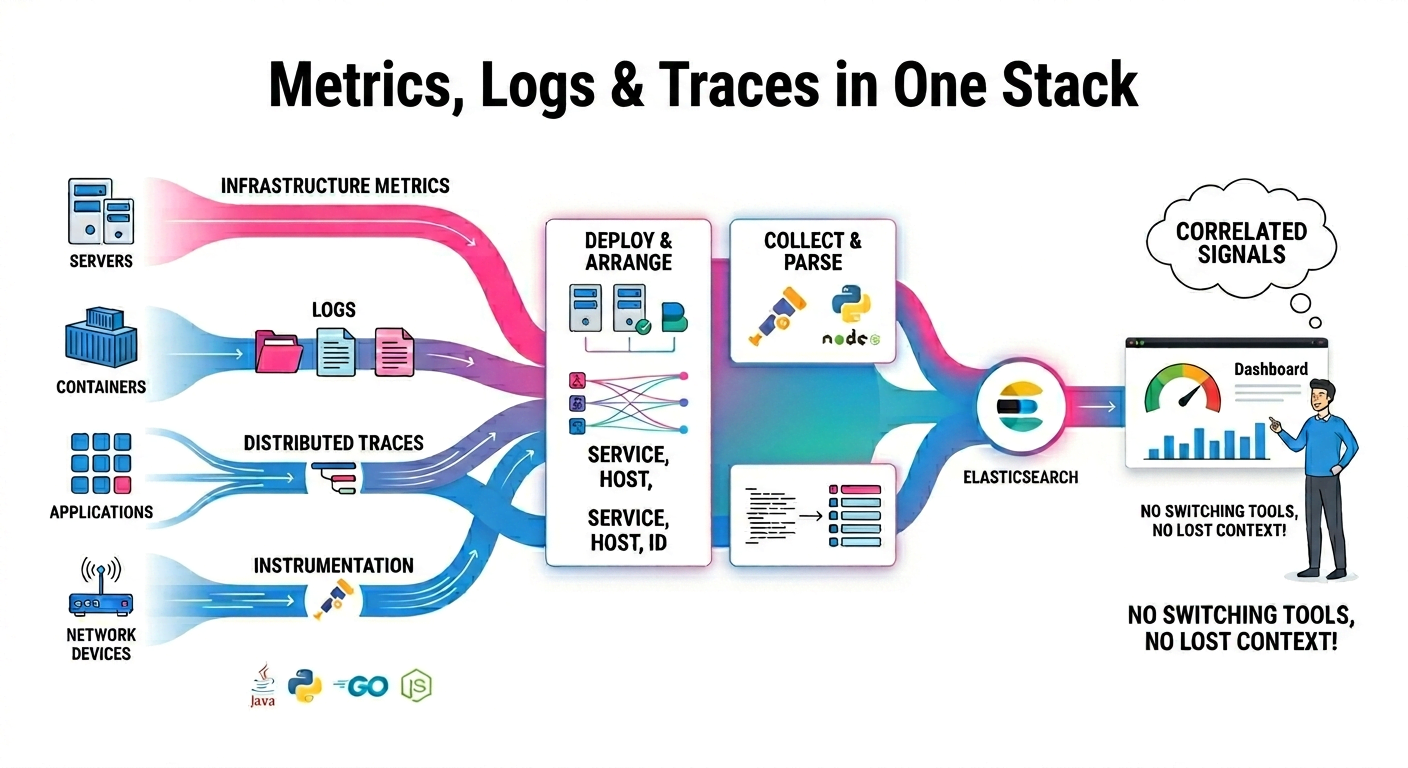

Metrics, Logs & Traces in One Stack

We implement the Elastic Observability stack end-to-end: Metricbeat and Elastic Agent for infrastructure metrics, Filebeat for structured and unstructured log collection, APM Server for distributed tracing, and OpenTelemetry collectors as a vendor-neutral instrumentation layer. All signals are correlated by service, host, and trace ID so engineers can pivot from a slow trace to the correlated logs and the host metric spike that caused it — without switching tools or losing context.

- Metricbeat, Elastic Agent, and Filebeat deployment and configuration
- APM Server setup with automatic correlation across signals
- OpenTelemetry collector integration for language-agnostic instrumentation
- Structured log parsing and field extraction at ingest time
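
To illustrate field extraction and trace-level correlation, here is a minimal Python sketch. It assumes a hypothetical raw log format (`<timestamp> <LEVEL> [trace_id=<hex>] <message>`) and uses ECS-style field names such as `trace.id` and `service.name`. The helper names `parse_log_line` and `group_by_trace` are illustrative only. In a real deployment this parsing would more likely live in an ingest pipeline or in the shipper configuration.

```python
import re
from collections import defaultdict

# Assumed (hypothetical) raw format:
#   "<ISO timestamp> <LEVEL> [trace_id=<hex>] <free-text message>"
LINE_RE = re.compile(
    r"^(?P<timestamp>\S+)\s+(?P<level>[A-Z]+)\s+"
    r"\[trace_id=(?P<trace_id>[0-9a-f]+)\]\s+(?P<message>.*)$"
)


def parse_log_line(line, service, host):
    """Turn one raw log line into a structured document.

    Returns None when the line does not match the assumed format.
    """
    match = LINE_RE.match(line)
    if match is None:
        return None
    return {
        "@timestamp": match.group("timestamp"),
        "log.level": match.group("level").lower(),
        "message": match.group("message"),
        "trace.id": match.group("trace_id"),
        "service.name": service,
        "host.name": host,
    }


def group_by_trace(docs):
    """Group structured documents by trace ID so logs from different
    services that belong to one request can be read together."""
    by_trace = defaultdict(list)
    for doc in docs:
        by_trace[doc["trace.id"]].append(doc)
    return dict(by_trace)
```

Because every document carries the same `trace.id`, `service.name`, and `host.name` keys, a log line can be joined to the trace and host metrics that share those values.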

Scaling & Data Retention Strategy

Observability data is high-cardinality and high-volume: a medium-sized application can generate tens of gigabytes of logs per day. Without deliberate retention management, storage costs grow without bound while query performance degrades on oversized shards. We design ILM policies that automatically transition data through hot, warm, cold, and frozen tiers, keeping recent data fast and historical data accessible at a fraction of the cost through searchable snapshots. Rollover thresholds are calibrated to your ingest rate so shards stay within the size range Elasticsearch handles efficiently, typically 10–50 GB depending on query patterns.
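
The calibration described above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not a production policy: the ingest rate, shard count, phase ages, and the repository name passed to `build_ilm_policy` are placeholders, and both function names are hypothetical. The resulting dictionary follows the shape of an ILM policy body, with a size-based rollover in the hot phase and a searchable snapshot in the frozen phase.

```python
def rollover_hours(daily_ingest_gb, primary_shards, target_shard_gb):
    """Hours for one primary shard to reach the target size,
    assuming a steady ingest rate spread evenly across primaries."""
    gb_per_shard_per_hour = daily_ingest_gb / primary_shards / 24
    return target_shard_gb / gb_per_shard_per_hour


def build_ilm_policy(target_shard_gb, max_age, snapshot_repo):
    """Build an ILM policy body as a plain dict.

    The phase ages below (7d, 30d, 90d, 365d) are placeholder values;
    real values depend on retention requirements and budget.
    """
    return {
        "policy": {
            "phases": {
                "hot": {
                    "actions": {
                        "rollover": {
                            # Roll over when a primary shard hits the target
                            # size, or at max_age as a safety cap.
                            "max_primary_shard_size": f"{target_shard_gb}gb",
                            "max_age": max_age,
                        }
                    }
                },
                "warm": {
                    "min_age": "7d",
                    "actions": {"forcemerge": {"max_num_segments": 1}},
                },
                "cold": {
                    "min_age": "30d",
                    "actions": {"set_priority": {"priority": 0}},
                },
                "frozen": {
                    "min_age": "90d",
                    "actions": {
                        "searchable_snapshot": {
                            "snapshot_repository": snapshot_repo
                        }
                    },
                },
                "delete": {"min_age": "365d", "actions": {"delete": {}}},
            }
        }
    }
```

For example, at an assumed 60 GB/day across 2 primary shards, a 30 GB target shard fills in 24 hours, so a `max_age` somewhat above one day lets the size condition trigger rollover under normal load.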

Custom Kibana Dashboards & Alerting

We design Kibana dashboards your team will actually use — purpose-built for your SLO targets, infrastructure profile, and on-call workflows.

- SLO/SLA error budget dashboards with burn rate alerting
- ML-based anomaly detection on metric and log streams
- Deployment event correlation with service error rates
- Alert routing to PagerDuty, Opsgenie, or Slack
- Runbook links embedded in alert payloads for faster triage
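
As a rough illustration of burn rate alerting, the Python sketch below computes how fast an error budget is being consumed and pages only when both a long and a short window exceed a threshold. The default threshold of 14.4 is a commonly cited value for a 1-hour window against a 30-day SLO (it corresponds to spending 2% of the budget in one hour). The function names, payload fields, and runbook URL are hypothetical and do not reflect a specific Kibana or PagerDuty schema.

```python
def burn_rate(errors, total, slo_target):
    """Ratio of the observed error rate to the error rate the SLO allows.

    A burn rate of 1.0 spends the error budget exactly over the SLO period;
    higher values spend it proportionally faster.
    """
    if total == 0:
        return 0.0
    error_budget = 1.0 - slo_target
    return (errors / total) / error_budget


def should_page(long_window, short_window, slo_target, threshold=14.4):
    """Page only if both windows burn faster than the threshold.

    Each window is an (errors, total) tuple. The short window keeps the
    alert from firing long after the problem has already stopped.
    """
    long_rate = burn_rate(long_window[0], long_window[1], slo_target)
    short_rate = burn_rate(short_window[0], short_window[1], slo_target)
    return long_rate >= threshold and short_rate >= threshold


def build_alert_payload(service, rate, runbook_url):
    """Assemble a generic alert body with the runbook link embedded."""
    return {
        "service": service,
        "summary": f"{service} error budget burn rate is {rate:.1f}x",
        "runbook": runbook_url,  # hypothetical field name
    }
```

With a 99.9% SLO, 20 errors in 1,000 requests gives a burn rate of about 20, which is above the threshold; if the short window shows no errors, `should_page` still returns `False` because the incident has likely ended.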
